On AI and Believability

Notes from a reunion with my calculus teacher.

“It’s as if this tsunami is coming at us and it’s so close we can see it on the horizon, and yet people are coming up with these explanations for, ‘oh it’s not actually a tsunami, that’s just a trick of the light.’”

— Dario Amodei

I recently caught up with my calculus teacher from high school. He was the longest-standing teacher at the school, teaching for fifty years in a small Midwestern town. In class he’d tell stories about former students who turned out to be a classmate’s parent or, occasionally, a grandparent.

He is retired now. We talked about all that has changed since I graduated and how the school math team is doing. I told him about my job as a software engineer working in AI.

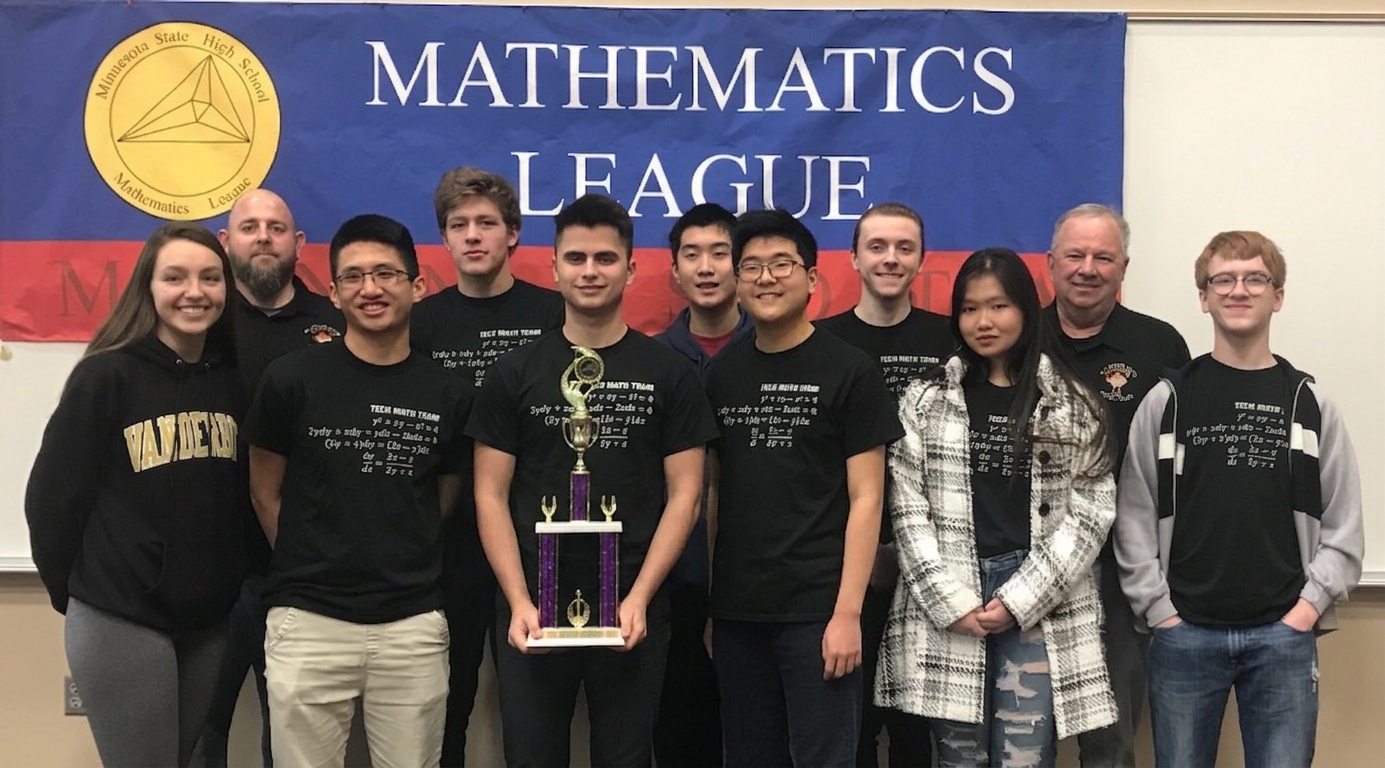

Math league state tournament. Mr. Boatz top right. (Me holding our 1st place trophy, hi!)

He had heard rumblings about AI over the last few years and had largely viewed them with skepticism. But one day last summer, he opened the newspaper and read that DeepMind’s AI had scored gold at the International Mathematical Olympiad (IMO). You have to understand this is a person who has dedicated his entire life to teaching calculus and coaching competition math, seeing students from the AMC 10, AMC 12, and AIME through to USAMO. He is deeply familiar with these problems, and he was deeply unsettled by this news. He tried to disable the AI features on his phone after reading it.

For most people, hearing that an AI model solved some hard math problems does not mean a whole lot. They have encountered hard math problems before: many will think of the hardest math test they took in high school, perhaps the math section of the SAT or ACT.

What is missing is an appreciation for how many levels there are to this. And at each level, the people at the level above seem like geniuses. As someone who qualified to take the AIME from the AMC 12, I can say it felt like anyone who has qualified for USAMO must be a genius. The AIME problems involved such clever thinking and reasoning, such sheer, genuine intelligence, it is hard for me to wrap my mind around what it would feel like even to make it to USAMO.

From there — and I only ever knew of two people in the state making it that far — there is another leap in difficulty to qualify for the team representing the U.S. at the IMO. These problems are for most people inconceivably hard, requiring brilliant, highly creative, flexible, novel thinking under great time pressure. They cannot be gamed or brute forced. I would have confidently said before last year that being able to solve IMO problems is essentially what it means to be highly intelligent at general problem solving.

And AI won gold.

So if you are me, or you are Mr. Boatz, you are forced to conclude that the models are highly intelligent, at least in this area. There really is no way of avoiding that conclusion besides changing your definition of intelligence, that is, moving the goalposts to the ever-shrinking set of tasks the models cannot yet do. And yet, the people I know outside of the AI bubble, who are more representative of the average person, are not at all impressed by AI. They use the free tier of ChatGPT to summarize a piece of text, ask for advice, or attempt some part of their job. And when it comes up short, they conclude it is a limited, unreliable, strange, albeit occasionally useful, tool. A tangential suspicion I have is that crypto (and all the baggage and grift that come along with it: meme coins, NFTs, pump-and-dump schemes) and AI live in the same headspace for many people. They suspect AI is in a similar boom-and-bust cycle of questionable real-world value.

There is, of course, plenty of speculation, hype, and mania in AI: the astronomical valuations of revenue-less, product-less startups with nothing more than ideas, or the pivot of failing companies from completely unrelated industries to AI infrastructure, reminiscent of the dot-com bubble. But the steadily improving underlying capability of the models is a phenomenon that I think should be viewed in isolation from the hype cycles and financial speculation of the AI industry. Indeed, the average person’s appreciation for AI capability is beginning to improve with the release of mainstream, user-friendly applications like Claude Cowork, Claude for Excel, and Claude Design, targeting users from less technical domains.

If you are a software engineer, the growth in capability of the models has been “nothing short of staggering”. When you kick off an AI agent on a new project that would otherwise take weeks to build on your own, go have dinner, and come back to find it cleanly designed, implemented, and deployed — you are forced to reckon with their intelligence. Intelligence of a jagged and alien kind unlike our own. They read textbooks in seconds, understand virtually all human knowledge, solve extremely difficult problems with trivial ease. Yes, the models have surprising weaknesses. They make simple errors, lack taste, overengineer, hallucinate, miss context. Left to their own devices, coding agents will happily apply larger and larger bandaids to solve problems caused by previous bandaids, piling up technical debt until the codebase is completely unmanageable. But they are continuing to improve rapidly, as are the harnesses around them.

I think of the problem sets I spent hours on in college, learning to use bitwise operations to replicate logical circuits in C. Or of the class projects I spent weeks on — building chess from scratch in C++, with a rendering engine, sprites, board state, rules, and a heuristic-based minimax computer engine to play against. These are all trivial for today’s frontier models. What used to require human reasoning, problem solving, and creativity has been distilled down to forward passes through a transformer: statistically probable outcomes of matrix multiplication. Nothing more.

“I thought AlphaGo was based on probability calculation, and that it was merely a machine. But when I saw this move, I changed my mind. Surely, AlphaGo is creative. This move was really creative and beautiful.”

— Lee Sedol, on AlphaGo’s move 37

When you spend years learning a craft, you appreciate how hard it is, and how many levels of mastery there are to it. Only when that craft falls within the reach of today’s models can you fully appreciate how good they are. Mr. Boatz can because he spent fifty years practicing and teaching competition math. I can because I write software for a living, which now amounts to managing AI agents to do what used to be my day-to-day job. But if your work is still outside of their domain — grounded in the physical world, defined by in-person human interaction, requiring tacit knowledge, lacking rapid feedback loops, using software incompatible with text-based interfaces — the models haven’t really reached you yet. So it is understandable why talk of human-level intelligence, even in 2026, can sound unserious and fringe.

Mr. Boatz, now seventy-eight, understands the capability of AI better than many people my age. Not because he has used the models much personally or knows how they are trained, but because he understands competition math. The teaching he is loved for (thoughtful problem sets and exams handwritten in neat cursive, chalkboard lectures, dry humor, and a focus on intuition and understanding) is deeply, patiently human. And then one day, machines were doing competition math better than almost any person alive.

I don’t know what to tell people who haven’t felt that yet. But I think, for better or for worse, it is only a matter of time until they do.